Debug Your AI Product: Private Team Workshop

Hamel Husain

ML Engineer with 20+ years of experience

Shreya Shankar

ML Systems Researcher

Find and prioritize your AI's biggest failures in 2 days — using your own data.

This isn't a course — we roll up our sleeves and work on your product with your data.

You bring your real traces and interaction logs. Over 2 days, we systematically find where your AI is breaking down, why users are losing trust, and what to fix first.

Most teams building AI products are stuck in the same loop: ship a change, hope it helps, manually spot-check a few outputs, repeat. Meanwhile, users hit failures nobody on the team has even seen.

In this private workshop, we break that cycle. Working from the traces and logs you bring, we systematically uncover where your AI is breaking down, build a catalog of failure modes specific to your use case, and leave you with a prioritized plan to fix what matters most.

This methodology has been refined by teaching it to 4,000+ practitioners from 500+ companies, and it is part of what we teach in our full-length course. In our experience, it's the highest-ROI activity in AI product development.

Each workshop can accommodate a team of up to 12 participants. We can help you identify which team members to bring.

Below is a sample agenda; it can be customized to your needs and schedule.

What you’ll learn

Walk away knowing exactly where your AI product is failing, why, and what to fix first — with a concrete plan your team can execute immediately.

Import and slice your real traces to expose failure modes your team has never seen

Build a structured catalog of exactly how and where your AI breaks down

Rank failures by business impact and effort so your team knows exactly what to tackle first

Leave with a 30- to 60-day implementation plan tied to your product metrics

Build automated checks and evaluation metrics tailored to your specific failure modes

Set up feedback loops so your team keeps improving after the workshop ends

Workshop agenda

Discovery: Import and explore your data (Day 1)

Import your real traces and interaction logs. We'll slice the data together, review representative samples, and start spotting patterns in how your AI responds to different inputs.
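To make "slice the data and review representative samples" concrete, here is a minimal sketch, not the workshop's own tooling. It assumes traces are exported as dictionaries with a hypothetical `feature` field; the function name `slice_and_sample`, the field names, and the sample size are all illustrative assumptions.

```python
import random
from collections import defaultdict

def slice_and_sample(traces, key, per_slice, seed=0):
    """Group traces by one field (e.g. a hypothetical 'feature' tag) and draw
    up to `per_slice` random traces from each group for manual review."""
    groups = defaultdict(list)
    for trace in traces:
        groups[trace.get(key, "unknown")].append(trace)
    rng = random.Random(seed)  # fixed seed so the review set is reproducible
    return {
        name: rng.sample(items, min(per_slice, len(items)))
        for name, items in sorted(groups.items())
    }

# Hypothetical exported traces; real logs would carry inputs, outputs, metadata.
traces = [
    {"id": 1, "feature": "search", "output": "..."},
    {"id": 2, "feature": "search", "output": "..."},
    {"id": 3, "feature": "summarize", "output": "..."},
    {"id": 4, "feature": "search", "output": "..."},
]
samples = slice_and_sample(traces, key="feature", per_slice=2)
for name, items in samples.items():
    print(name, [t["id"] for t in items])
```

Sampling within each slice, rather than across the whole log, keeps rare slices from being drowned out by the most common kind of request.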

Map failure patterns in your product (Day 2)

Systematically review your traces to identify and catalog the ways your AI fails. Build a taxonomy of failure modes specific to your product and quantify their frequency and impact.
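Quantifying frequency can be as simple as tallying labels once traces have been reviewed. The sketch below assumes each reviewed trace has been tagged with zero or more failure-mode labels; the trace IDs and label names are hypothetical placeholders, not a prescribed taxonomy.

```python
from collections import Counter

# Hypothetical annotations: each reviewed trace gets zero or more failure-mode labels.
annotations = {
    "trace-001": ["missed_handoff"],
    "trace-002": [],
    "trace-003": ["hallucinated_policy", "missed_handoff"],
    "trace-004": ["wrong_tone"],
}

def failure_mode_rates(annotations):
    """Return (label, count, share_of_traces) tuples, most frequent first.
    Each label is counted at most once per trace."""
    total = len(annotations)
    counts = Counter(label for labels in annotations.values() for label in set(labels))
    return [(label, n, n / total) for label, n in counts.most_common()]

for label, n, share in failure_mode_rates(annotations):
    print(f"{label:<22} {n:>3}  {share:.0%}")
```

Counting the share of traces (rather than raw label occurrences) keeps a single messy trace from inflating one failure mode.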

Prioritize fixes and build your roadmap (Day 2)

Rank failure modes by business impact and effort to fix. Build a concrete "fix first / fix next / don't bother" roadmap tied to your product metrics with clear owners and timelines.
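One way to turn impact and effort scores into "fix first / fix next / don't bother" buckets is sketched below. The 1-5 scales, the thresholds in `bucket`, and the example failure modes are all hypothetical assumptions for illustration; in practice the team sets these based on its own product metrics.

```python
from dataclasses import dataclass

@dataclass
class FailureMode:
    name: str
    impact: int  # hypothetical 1-5 score combining frequency and severity
    effort: int  # hypothetical 1-5 score for estimated cost to fix

def bucket(mode: FailureMode) -> str:
    """Illustrative rule only: high impact and cheap to fix goes first."""
    if mode.impact >= 4 and mode.effort <= 3:
        return "fix first"
    if mode.impact >= 3:
        return "fix next"
    return "don't bother"

modes = [
    FailureMode("missed_handoff", impact=5, effort=2),
    FailureMode("hallucinated_policy", impact=4, effort=4),
    FailureMode("wrong_tone", impact=2, effort=1),
]
# Order by impact (highest first), then effort (cheapest first), then bucket.
for m in sorted(modes, key=lambda m: (-m.impact, m.effort)):
    print(f"{bucket(m):<13} {m.name}")
```

A simple, explicit rule like this is easy to argue about as a team, which is the point: the thresholds become a visible decision rather than a gut call.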

Learn directly from Hamel & Shreya

Hamel Husain

Trained 4,000+ AI practitioners from 500+ companies on AI evaluation.

Shreya Shankar

ML Systems Researcher making AI evaluation work in practice

Who this workshop is for

Engineering teams with a live AI product that has real users.

Product managers responsible for AI features who need a data-driven improvement plan instead of guessing which changes will move the needle.

Technical leaders who want to stop relying on manual QA and spot-checks, and instead build a systematic approach to improving AI quality.

Prerequisites

A live AI product or feature with real users

This workshop is hands-on with your data. You need a deployed product generating real interactions we can analyze together.

Access to recent traces or interaction logs

We work directly with your production data. You'll receive a prep guide with instructions on what to collect and how to format it.

A dedicated team of 3+ participants

Engineers, PMs, and domain experts who know the product. The best results come from cross-functional teams who can act on findings immediately.

What's included

Live sessions

Learn directly from Hamel Husain & Shreya Shankar in a real-time, interactive format.

2-day deep dive on your production stack

We investigate your real system end to end: data flows, prompts, evals, and guardrails. We reproduce real failures so every recommendation is grounded in what your users actually see.

Ranked failure list with real user traces

We surface your highest-impact failure modes, each tied to concrete examples from your own logs. You leave with a ranked backlog of issues actually hurting trust, revenue, or support load right now.

Executive debrief and written findings

We end with a focused exec readout plus a written report summarizing risks, wins, and next steps — so leadership can make resourcing decisions without the full technical deep dive.

Maven Guarantee

Your purchase is backed by the Maven Guarantee.

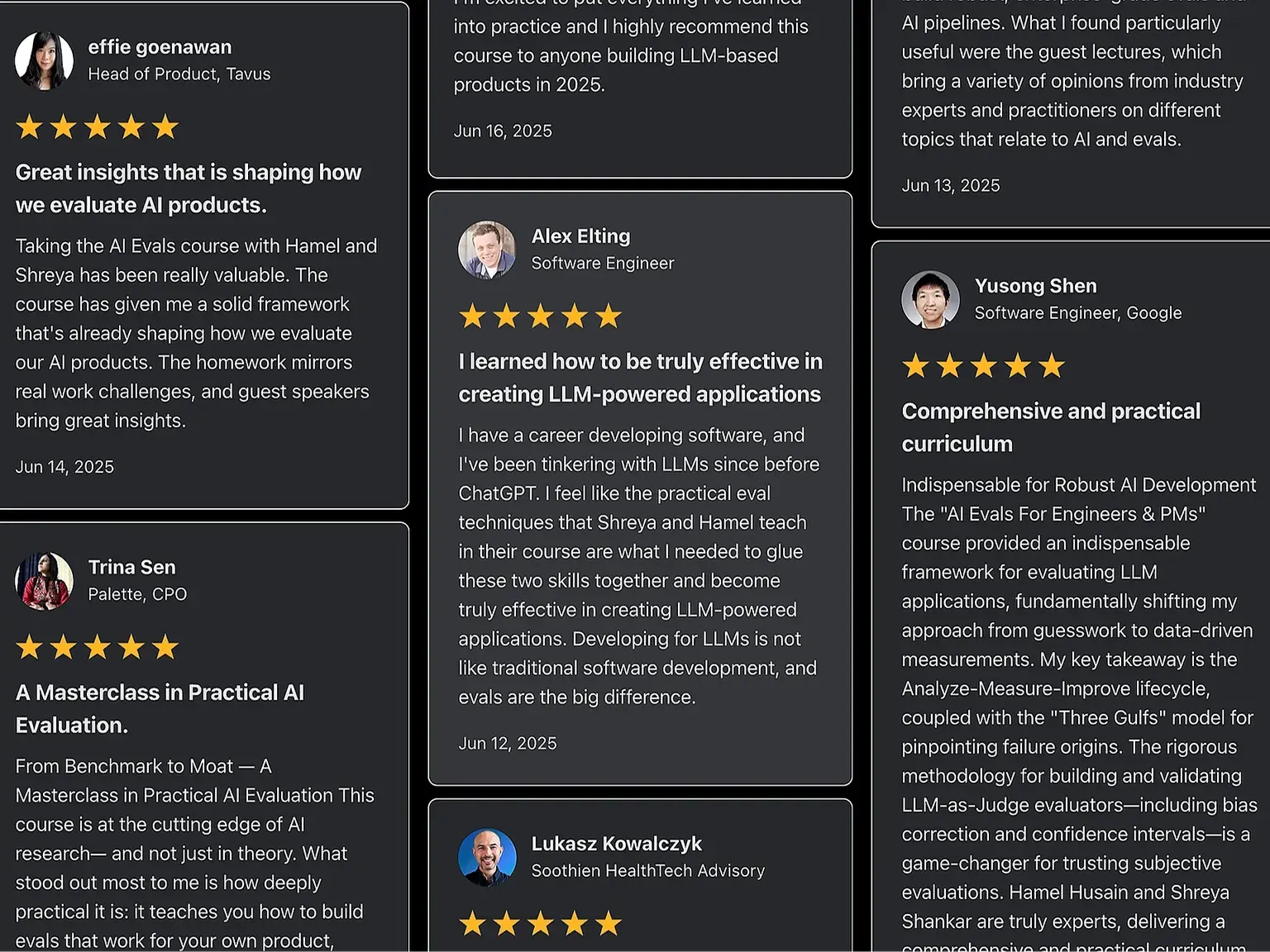

See what our students have to say (reviews from our full course).

See more reviews at bit.ly/eval-reviews



Maven for Teams

Reimbursement

Get your company to pay

Everything L&D needs: email template, receipts, and certificate of completion.

Team discount

Learn with your teammates

Save 20%+ when 2 or more teammates enroll in the same cohort.

Private cohort

Run a cohort for your org

A dedicated cohort with a custom schedule and curriculum, tailored to your team.

$13,500 USD

2 cohorts