Collaborative AI Evals with Human Feedback

In this video

What you'll learn

Why collaboration is essential to AI evals

Running evals is a team sport: PMs, data scientists, and SMEs all need a seat at the table, not just the engineers.

Human feedback loops before scaling LLM-as-a-judge

Collecting and calibrating human annotations before handing off judgment to automated evaluators at scale.

Design evals and simulations for non-technical teammates

Create, review, and iterate on evals without needing to write code or understand technical details.

Real-world customer stories from LangWatch

Hear customer stories from LangWatch on why cross-functional feedback is key to successful evals.

Why this topic matters

Most teams automate their evals too early. Human feedback loops are needed to make automated eval scores trustworthy. When developers, PMs, and SMEs can't easily collaborate on evals, teams end up optimizing for metrics that don't reflect real product quality. This session shows you how to bridge that gap with practical workflows and a live demo of tools designed for the whole team.

You'll learn from

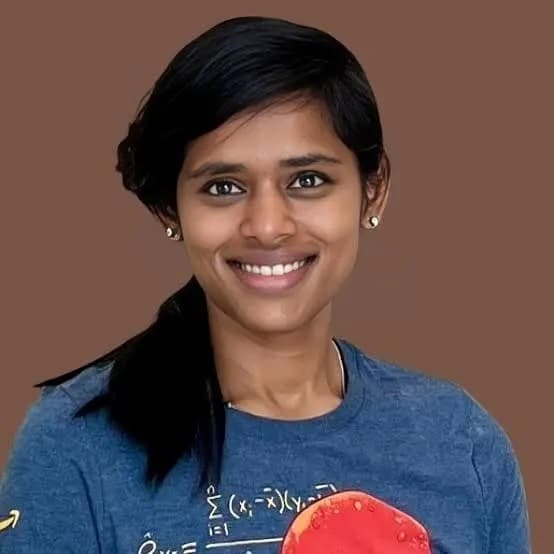

Rogério Chaves

CTO & Cofounder at LangWatch

Rogério Chaves is CTO and cofounder of LangWatch. He is an expert in LLM evaluation, agent testing, and LLMOps. He created the Agent Testing Pyramid, which covers unit tests for AI evals, probabilistic evals, and end-to-end agent simulation.

Go deeper with a course

AI Evals and Analytics Playbook

Stella Liu and Amy Chen

Head of AI Applied Science. Cofounder at AI Evals & Analytics
