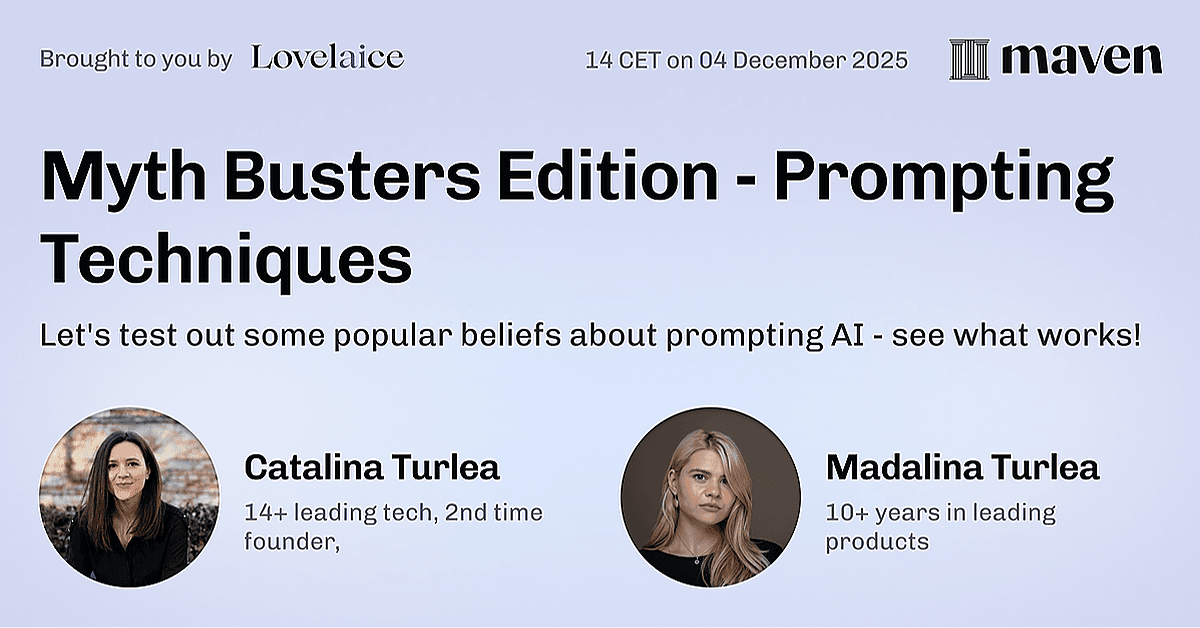

AI Myth Busters: Testing Viral Prompting Hacks Live

Hosted by Catalina Turlea and Madalina Turlea

In this video

What you'll learn

Test popular beliefs about prompting AI

Design your own experiments to test prompting claims

Save hours of trial-and-error

Why this topic matters

Leave with a playbook and data about all of these myths.

You'll learn from

Catalina Turlea

Founder @Lovelaice

I bring over 14 years of software development expertise and a decade of startup experience to help teams build AI products that actually work. After founding my first company six years ago, I now run a consultancy that specializes in helping startups build MVPs, solve complex technical challenges, and integrate AI effectively.

I've seen firsthand how AI projects fail due to a lack of systematic experimentation: teams treat AI like traditional software and struggle with inconsistent results. That's why I co-created Lovelaice, a platform designed for non-technical professionals to experiment with AI agents systematically.

Madalina Turlea

Co-founder @Lovelaice, 10+ years in Product

I'm co-founder of Lovelaice and a product leader with 10+ years building products across fintech, payments, and compliance. I hold a CFA charter and have led AI product development in highly regulated environments — where AI failures aren't just embarrassing, they're liabilities.

I've watched smart teams make the same mistakes: choosing models based on benchmarks that don't reflect their use case, writing prompts that work in testing but fail in production, and leaving domain experts out of the loop. These aren't edge cases — they're why 80% of AI projects underperform.

Through these failures (my own included), I developed a systematic approach to AI experimentation that puts domain expertise at the center. I teach what I've learned building Lovelaice: how to test, evaluate, and iterate on AI — before it reaches your users.
