AI Evals Certification Course for Engineers & Product Managers

Vivian Aranha

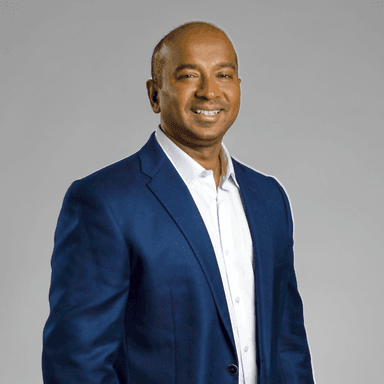

Founder & AI Instructor at School of AI


AI Evals for Engineers & PMs: Measure, Improve, and Ship Reliable AI

Learn on your schedule with this self-paced course featuring pre-recorded sessions. Enjoy lifetime access, so you can revisit and reinforce your learning whenever needed.

AI products differ fundamentally from traditional software. Their outputs are non-deterministic, and their behavior shifts as models, prompts, and data change. Because of this, traditional testing methods like unit tests and QA checklists are not enough on their own.

Teams building AI systems—whether chatbots, RAG systems, copilots, or autonomous agents—often struggle with a critical question: How do we know if our AI system is actually improving or silently getting worse?

This course teaches engineers and product managers how to design modern AI evaluation systems that measure quality, detect regressions, and guide product decisions. You will learn how to evaluate LLM outputs, score AI behavior using structured rubrics, automate evaluation pipelines, and operationalize evals in CI/CD workflows.

By the end of the course, you will understand how leading AI teams ship reliable AI products using evaluation frameworks that combine datasets, metrics, automated scoring, human feedback, and monitoring dashboards.
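As a rough illustration of the "improving or silently getting worse" question, the sketch below runs two versions of a system over the same small dataset and flags a drop in score. It is not taken from the course materials: `EvalCase`, `exact_match`, `run_eval`, `is_regression`, the two stub systems, and the 0.02 tolerance are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    expected: str


def exact_match(output: str, expected: str) -> float:
    """Score 1.0 when the normalized output equals the expected answer."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_eval(system: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Return the mean score of `system` over all cases."""
    scores = [exact_match(system(c.prompt), c.expected) for c in cases]
    return sum(scores) / len(scores)


def is_regression(baseline: float, candidate: float, tolerance: float = 0.02) -> bool:
    """Flag a regression when the candidate falls more than `tolerance` below baseline."""
    return candidate < baseline - tolerance


# Hypothetical stand-ins for two releases of an AI system.
def system_v1(prompt: str) -> str:
    return {"Capital of France?": "Paris", "2 + 2?": "4"}.get(prompt, "")


def system_v2(prompt: str) -> str:
    return {"Capital of France?": "Paris", "2 + 2?": "5"}.get(prompt, "")


CASES = [EvalCase("Capital of France?", "Paris"), EvalCase("2 + 2?", "4")]

baseline_score = run_eval(system_v1, CASES)
candidate_score = run_eval(system_v2, CASES)
print(baseline_score, candidate_score, is_regression(baseline_score, candidate_score))
```

Exact match is only one possible metric; a real system would likely swap in task-specific scoring while keeping the same compare-against-baseline shape.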

What you’ll learn

Build production-ready AI evaluation systems to measure LLM quality, detect regressions, and ship reliable AI products.

Identify what to evaluate in LLMs, RAG systems, and AI agents

Define measurable success criteria for AI behavior

Convert vague goals into evaluation signals

Design representative evaluation datasets

Capture edge cases and failure scenarios

Create benchmarks that reflect real user behavior

Use metrics like correctness, relevance, faithfulness, and safety

Build scoring rubrics for human and automated evaluation

Align AI metrics with product goals

Build automated evaluation pipelines

Compare models, prompts, and system versions

Detect regressions across releases

Score retrieval quality and groundedness

Evaluate tool usage and agent planning

Measure multi-step reasoning performance

Add evaluation gates to CI/CD pipelines

Build dashboards for AI quality monitoring

Use eval data to guide product decisions
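Two of the outcomes above, scoring retrieval quality and adding evaluation gates to CI/CD, can be sketched in a few lines. This is a minimal example under stated assumptions, not the course's own code: the function names, the document IDs, and the threshold values are hypothetical.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of relevant documents that appear in the top-k retrieved results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def failed_gates(metrics: dict[str, float], thresholds: dict[str, float]) -> list[str]:
    """Return the names of metrics that fall below their minimum threshold."""
    return [
        name
        for name, minimum in thresholds.items()
        if metrics.get(name, 0.0) < minimum
    ]


# Hypothetical retrieval result for a single query.
retrieved = ["d3", "d1", "d7", "d2"]
relevant = {"d1", "d2"}

metrics = {
    "recall_at_3": recall_at_k(retrieved, relevant, k=3),
    "reciprocal_rank": reciprocal_rank(retrieved, relevant),
}
# Assumed minimums; a real project would tune these per product.
thresholds = {"recall_at_3": 0.8, "reciprocal_rank": 0.4}

failures = failed_gates(metrics, thresholds)
print(metrics, failures)
```

In a CI job, a non-empty `failures` list would typically translate into a non-zero exit code so the release is blocked.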

Learn directly from Vivian

Vivian Aranha

AI architect with 2.2M+ learners, building enterprise-grade agentic systems

Who this course is for

AI Engineers & ML Engineers

Building LLM applications, RAG systems, or AI agents who need reliable ways to measure & improve model behavior.

Tech Leads & AI Startup Founders

Leading teams building AI products who need to operationalize evaluation before deploying models to production.

What's included

Lifetime access

Go back to course content and recordings whenever you need to.

Community of peers

Stay accountable and share insights with like-minded professionals.

Certificate of completion

Share your new skills with your employer or on LinkedIn.

Production-Ready AI Evaluation Framework

Learn how to design evaluation systems used by modern AI teams to measure quality and prevent regressions.

Evaluate LLMs, RAG Systems, and AI Agents

Go beyond simple prompts and learn how to evaluate complex AI architectures and multi-step systems.

Hands-On Labs and Real Evaluation Pipelines

Build datasets, scoring systems, automated evaluators, and dashboards used in real AI products.

Automated AI Evaluation with LLM-as-Judge

Use AI to evaluate AI at scale using structured prompts, scoring rubrics, and automated pipelines.

Ship AI Products with Confidence

Learn how to use evaluation metrics to guide release decisions, model upgrades, and product strategy.
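The LLM-as-judge item above combines a structured prompt, a scoring rubric, and automated parsing. The sketch below shows one possible shape for that loop. It is illustrative only: `RUBRIC`, `build_judge_prompt`, `parse_scores`, `judge`, and the 1 to 5 scale are assumptions, and `fake_model` is a stub standing in for a real model call.

```python
import json
from typing import Callable

# Hypothetical rubric: criterion name -> question the judge should answer.
RUBRIC = {
    "correctness": "Is the answer factually correct?",
    "relevance": "Does the answer address the question?",
}


def build_judge_prompt(question: str, answer: str, rubric: dict[str, str]) -> str:
    """Render the rubric and the item under test into a single judge prompt."""
    criteria = "\n".join(f"- {name}: {desc}" for name, desc in rubric.items())
    return (
        "Score the answer on each criterion from 1 to 5.\n"
        f"Criteria:\n{criteria}\n\n"
        f"Question: {question}\nAnswer: {answer}\n"
        'Reply with JSON only, for example {"correctness": 4, "relevance": 5}.'
    )


def parse_scores(raw: str, rubric: dict[str, str]) -> dict[str, int]:
    """Parse the judge's JSON reply and reject missing or out-of-range scores."""
    data = json.loads(raw)
    scores = {}
    for name in rubric:
        value = data.get(name)
        if not isinstance(value, int) or not 1 <= value <= 5:
            raise ValueError(f"invalid score for {name!r}: {value!r}")
        scores[name] = value
    return scores


def judge(
    question: str,
    answer: str,
    call_model: Callable[[str], str],
    rubric: dict[str, str] = RUBRIC,
) -> dict[str, int]:
    """Ask the judge model for rubric scores and return them as a dict."""
    return parse_scores(call_model(build_judge_prompt(question, answer, rubric)), rubric)


def fake_model(prompt: str) -> str:
    """Stub judge; a real pipeline would call an LLM API here."""
    return '{"correctness": 5, "relevance": 4}'


print(judge("What is the capital of France?", "Paris", fake_model))
```

Validating the judge's reply before using it matters because the judge is itself a model and can return malformed or out-of-range output.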

Maven Guarantee

This course is backed by the Maven Guarantee. Students are eligible for a full refund up until the halfway point of the course.

Course syllabus

32 lessons • 10 projects

Week 1

Day 1 — Foundations of AI Evaluation

Day 2 — Metrics, Scoring & Human Feedback

Day 3 — Automated LLM-Based Evaluation

Week 2

Day 4 — Evaluating RAG, Tools & Agents

Day 5 — Production Evals, Monitoring & Decision-Making

Schedule

Projects

4-6 hrs

Async content

6-8 hrs


$20 USD
