Accelerating Innovation with A/B Testing

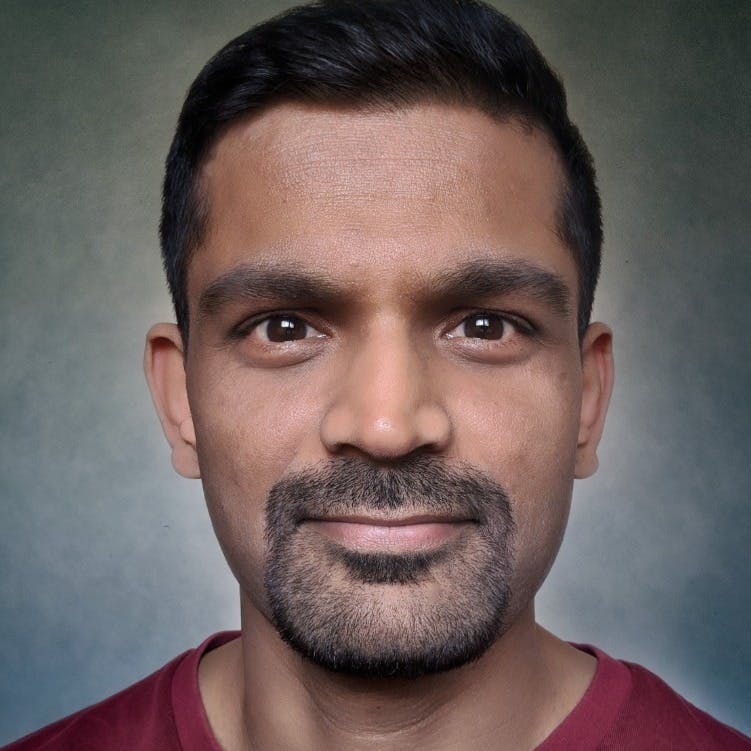

Dr. Ronny Kohavi

Author of best-selling A/B testing book


Empower your organization to be data-driven and innovative

Through multiple real examples of well-run experiments and stories from Microsoft, Amazon, and Airbnb, you will see the humbling reality that we are terrible at assessing the value of ideas.

Trivial changes can be surprisingly useful, whereas large efforts often fail. Accelerate innovation by building Minimum Viable Products (MVPs) and features, and make the organization more evidence-based and humble as it adopts and learns to use evidence from the gold standard in science—the controlled experiment.

You will understand the challenges in designing and running trustworthy randomized controlled experiments (A/B tests), including the importance of the Overall Evaluation Criterion (OEC), scaling, common pitfalls, and Twyman's Law. We cover cultural challenges, institutional memory, the maturity model, observational causal studies, offline evaluation, AI/machine learning, triggering, build-vs-buy trade-offs, and requested topics.

The Advanced Topics course is designed as a technical follow-on: https://bit.ly/AdvancedABFS

What you’ll learn

Learn from a world-leading expert how to design and analyze trustworthy A/B tests to evaluate ideas, integrate AI/ML, and grow your business

Hear multiple real memorable stories and examples

Guess the outcome of real A/B tests

See data on industry success rates

Understand key concepts like causality and the Hierarchy of Evidence

Review the key organizational tenets required for effective experimentation.

Learn quasi-experimental techniques

Designing metrics is hard. There is a hierarchy of metrics and perverse incentives.

The most important metrics make up the Overall Evaluation Criterion (OEC).

See good and bad examples.

Getting numbers is easy; getting numbers you can trust is hard

Understand common pitfalls and how to design reliable and trustworthy tests.

Cultural evolution

Institutional memory, ideation, and prioritization

Offline and online evaluation

Triggering

Learn directly from Ronny

Who this course is for

Data science managers and scientists will be able to design experiments and interpret their results in a trustworthy manner

Program managers focused on growth, revenue, conversions, and prioritization will understand how to provide the org with robust, clear metrics

Engineering leaders will be able to make organizations more data-driven and efficient with fewer severe incidents through A/B tests

What's included

Live sessions

Learn directly from Dr. Ronny Kohavi in a real-time, interactive format.

Recordings

Go back to course content and recordings for 6+ months

Community of peers

Stay accountable and share insights with like-minded professionals. Join the LinkedIn alumni group.

Certificate of completion

Share your new skills with your employer or on LinkedIn.

Course syllabus

5 live sessions • 6 lessons

Week 1

Jun 1

Session 1: 2 hours + optional 1/2-hour bonus & Q&A

Jun 2

Session 2: 2 hours + optional 1/2-hour bonus & Q&A

Jun 4

Session 3: 2 hours + optional 1/2-hour bonus & Q&A

Session 1: Introduction, interesting examples, organizational tenets

Session 2: End-to-end example, metrics, and the OEC

Session 3: Statistics, end-to-end example 2, Twyman's Law, ideas, prioritization

Week 2

Jun 8

Session 4: 2 hours + optional 1/2-hour bonus & Q&A

Jun 11

Session 5: 2 hours + optional 1/2-hour bonus & Q&A

Session 4: Cultural challenges, maturity, observational causal studies, pitfalls

Session 5: AI/ML, triggering, leakage/interference, scaling, requested topics

Schedule

Live sessions

13 hrs

Three sessions of 2.5 hours in week 1, two sessions in week 2. Optional 1-hour follow-on session.

Mon, Jun 1

4:00 PM—6:30 PM (UTC)

Tue, Jun 2

4:00 PM—6:30 PM (UTC)

Thu, Jun 4

4:00 PM—6:30 PM (UTC)

Testimonials

- Ronny made the content amazingly easy to consume...I can't recommend it enough

Dylan Lewis

Experimentation Leader at Atlassian

- If you're interested in running A/B tests and learning the science behind them, my highest recommendation is to take Ronny Kohavi's cohort-based course on the topic. (The book Ronny co-authored is tied for first on my reading list too.)

Ryan Lucht

Director of Growth Strategy, Cro Metrics

- The entire course and the way it was delivered was amazing - had tons of learning, especially on where we could go wrong. Examples followed by key concepts was great. Really appreciate Ronny taking time to answer each question even after the session.

Pavan Gangisetty

Staff Data Analyst @ Intuit

- The culture of Q&A during the session. The depth and width of the experimentation topic. Really eye-opening learnings from Ronny. Thank you, thank you, thank you!

Han Dong

Sr. Business Intelligence Engineer @ Credit Karma

- Ronny covered similar topics that are in his book, but in a way that internalizes the points much deeper.

Scott Theisen

Experimentation Product Manager @ Ford Credit

- I love that the session goes beyond the tech & stats of experimentation and also address the cultural aspects.

Sharath Bulusu

Director of Product Management @ Google

100% Customer Satisfaction Guarantee

Not satisfied after attending the first session and before session 2 starts? Get a full refund.

Companies with two or more people that took the course

Top: Companies sorted by approximate market cap. A/B Vendors: sorted by alphabetical order

Maven for Teams

Reimbursement

Get your company to pay

Everything L&D needs: email template, receipts, and certificate of completion.

Get reimbursed

Private cohort

Run a cohort for your org

A dedicated cohort with a custom schedule and curriculum, tailored to your team.

Book a private cohort